Hot take: the pandemic caused event attendance to skyrocket. When work shifted remote, events followed suit, moving virtual. With that, events became more accessible than ever.

Virtual events, by definition, are accessible. They provide entrance to viewers who could not otherwise attend. But that audience has different accessibility requirements than in-person attendees.

For example, a recent study found that 80% of viewers aged 18-25 watch streaming content with captions. Of those, only 29% are deaf or hard of hearing. Captions are a preference regardless of ability.

Event planners have always designed in-person events with audience accessibility in mind. Faced with an explosion of online gatherings, planners must learn to use new tools to create accessible spaces. Fortunately, these tools have been in active use by broadcast professionals for many years. Once practiced, they are simple to integrate.

Here are 3 ways for event planners to improve accessibility for their virtual events in 2022.

Captioning

Captioning renders speech and other important audio content as text on screen. Captions are synchronized with content as it appears on screen.

Is Captioning Required?

Since 1973, the Rehabilitation Act has required federal agencies to make their programs and communications accessible to people with disabilities, which can include real-time captioning or interpretation. This also applies to any business receiving federal grants or loans. If your business received a PPP Loan or EIDL Grant due to COVID-19, your communications are supposed to be accessible.

Types of Captions

There are two types of captions: open and closed.

Open captions are overlaid on top of finished video content. Imagine watching a movie, and a few characters are speaking in a foreign language. Their dialogue would appear as subtitles at the bottom of the screen. Audience members cannot turn open captions on or off: they are a part of the content.

Closed captions, however, are transmitted as metadata. They appear on a separate layer from the content, allowing viewers to enable or disable them depending on their preference. Most video players have a small closed captions button which controls whether the captions are displayed or not.

While offering a better experience, closed captions are more complex to produce. Most modern players, such as those on YouTube and Facebook, accept caption metadata in the industry-standard CEA-608 format. Encoding that metadata requires dedicated hardware or software, called a caption encoder, which adds a step to the production workflow.

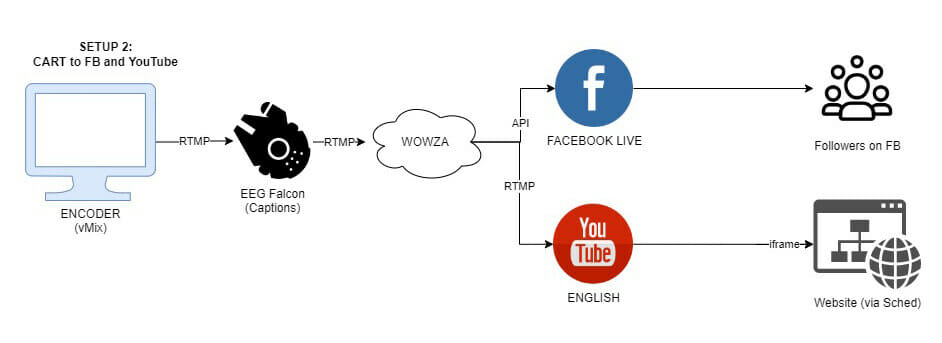

A sample signal flow that encodes captions to Facebook and YouTube simultaneously.

YouTube and Facebook both maintain up-to-date support documentation on how to incorporate captions into their platforms.

Automated vs. Manual Captions

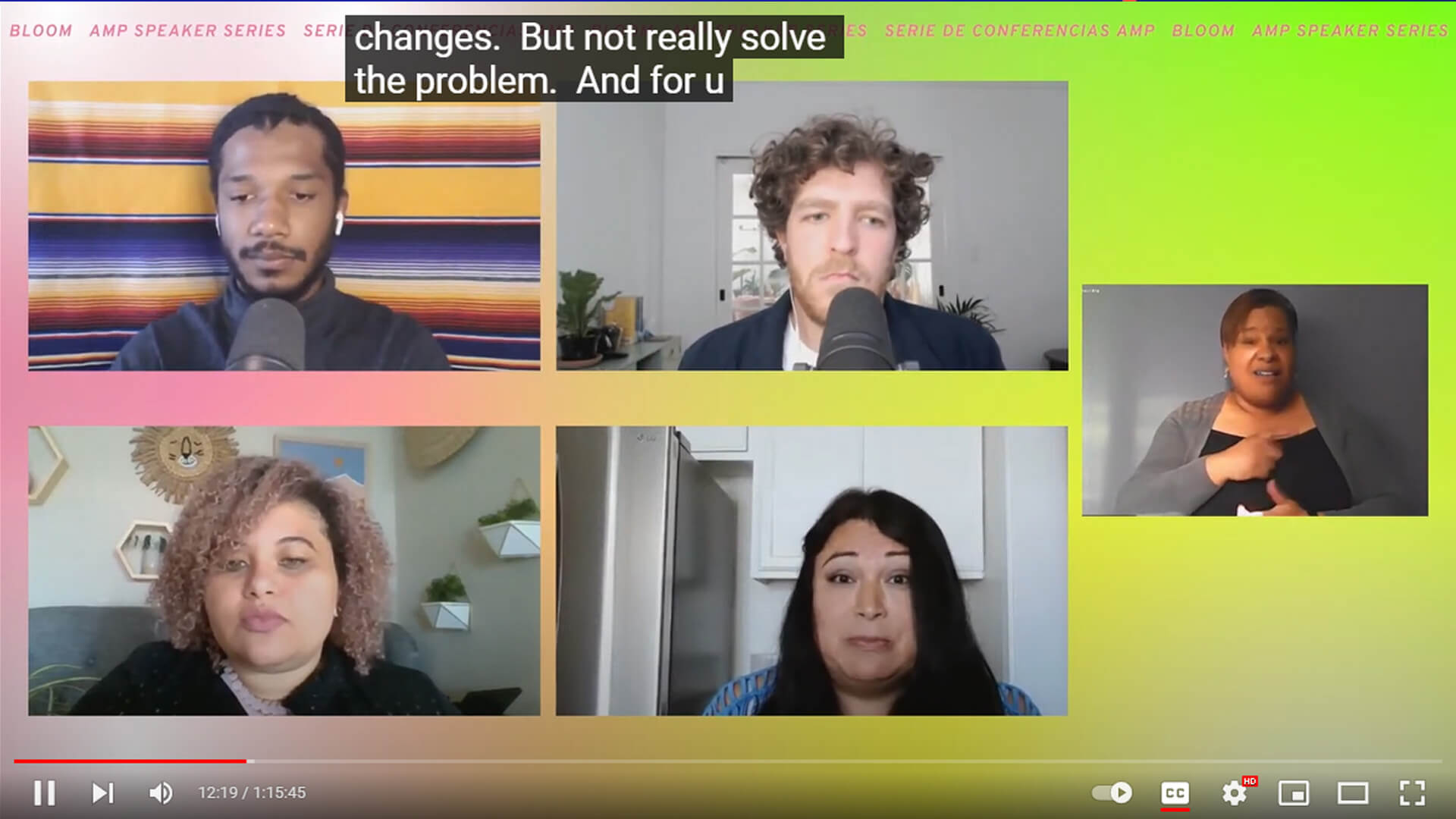

Image description: a screenshot of a virtual event with 5 people in separate boxes. At the top of the screen are closed captions. The bottom of the screen shows the video player control bar, with the captions button enabled as indicated by a thin red underline.

Captioning can come from one of two places: a robot or a human. By robots, I mean AI-powered software solutions.

Many streaming platforms have built-in automated captioning or integrate with platforms like otter.ai or Interprefy. While these options are inexpensive, they lack precision. Automated captioning accuracy in 2022 is around 85-90%. With technical or nuanced language, automation is less reliable.

Some automated captioning solutions can “learn” by allowing producers to upload a script or keywords and phrases. This can improve the service, especially when scripts feature names, acronyms, or proper nouns.

But if you want true precision, hiring a professional captioner is still the best solution. The accuracy of Communication Access Realtime Translation (CART) captioners hovers around 99%. They achieve this by reviewing materials with event planners beforehand.

Professional captioners need real-time access to the audio of your stream. Producers should add a few seconds of latency to their stream to allow time for captioners to interpret and render the feed.
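To put those accuracy figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes the percentages describe word-level accuracy, and it uses a hypothetical 60-minute session at 150 spoken words per minute. Both of those are my assumptions for illustration, not measurements from any captioning vendor.

```python
def expected_caption_errors(word_count: int, accuracy_percent: int) -> int:
    """Estimate how many words are miscaptioned at a given accuracy (whole percent)."""
    if not 0 <= accuracy_percent <= 100:
        raise ValueError("accuracy_percent must be between 0 and 100")
    # Integer math keeps the estimate free of floating-point rounding noise.
    return word_count * (100 - accuracy_percent) // 100


# Hypothetical session: 60 minutes at an assumed 150 spoken words per minute.
words = 60 * 150

for label, accuracy in [
    ("automated, low end", 85),
    ("automated, high end", 90),
    ("CART captioner", 99),
]:
    errors = expected_caption_errors(words, accuracy)
    print(f"{label}: roughly {errors} errors in {words} words")
```

Under those assumptions, a one-hour session works out to roughly 900 to 1,350 miscaptioned words with automation, compared with about 90 for a professional captioner.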

Captioning Costs

Professional captioning is usually charged at an hourly rate. Platform-based automated captioning solutions may be free, while integrated solutions charge by the minute. Enhanced automated captioning solutions such as those offered by AI-Media or 3Play can cost $25 to $100 per hour. CART solutions leveraging a professional captioner can cost $100-$350 an hour depending on a variety of factors.
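If you are budgeting, the hourly ranges above are easy to turn into a quick estimate. The sketch below is only that: the function name and the 3-hour event are hypothetical, and real quotes will vary with the factors your vendor cares about.

```python
# Hourly rate ranges in USD, taken from the figures quoted above.
HOURLY_RATES = {
    "enhanced_automated": (25, 100),
    "cart": (100, 350),
}


def estimate_captioning_cost(service: str, hours: float) -> tuple:
    """Return a (low, high) cost estimate in USD for the given service and duration."""
    if hours < 0:
        raise ValueError("hours must not be negative")
    low_rate, high_rate = HOURLY_RATES[service]
    return (low_rate * hours, high_rate * hours)


# Hypothetical 3-hour virtual event.
for service in HOURLY_RATES:
    low, high = estimate_captioning_cost(service, 3)
    print(f"{service}: ${low:,.0f} to ${high:,.0f}")
```

For that 3-hour example, enhanced automated captioning lands between $75 and $300, while a CART captioner lands between $300 and $1,050.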

Language Interpretation

Language interpretation is another popular accessibility request. If your audience extends across many countries, it may be important to develop programming in several languages. Professional language interpreters can add multi-language support to any production.

Depending on platform and language, this interpretation could come as an additional caption track. Platforms that offer CEA-608 closed captions can only encode in English, Spanish, French, Portuguese, Italian, German, and Dutch due to character limitations. If a platform uses more modern CEA-708 captions, more languages are available. And of course there is no limit to language if captions are open instead of closed.
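That decision can be sketched as a small lookup. This is a simplified planning aid, not a description of how any platform actually works: the function name and the `platform_supports_cea708` flag are hypothetical, and the language list is just the one given above.

```python
# Languages listed above as encodable in CEA-608 closed captions.
CEA_608_LANGUAGES = {
    "english", "spanish", "french", "portuguese", "italian", "german", "dutch",
}


def suggest_caption_delivery(language: str, platform_supports_cea708: bool = False) -> str:
    """Suggest a caption delivery method for a language (planning aid only)."""
    if language.strip().lower() in CEA_608_LANGUAGES:
        return "closed captions (CEA-608)"
    if platform_supports_cea708:
        # CEA-708 covers more languages, but not necessarily every one.
        return "closed captions (CEA-708), if the platform supports this language"
    # Open captions are part of the picture itself, so any language works.
    return "open captions"


print(suggest_caption_delivery("Spanish"))
print(suggest_caption_delivery("Japanese"))
print(suggest_caption_delivery("Japanese", platform_supports_cea708=True))
```

Always confirm language support with your platform and caption encoder before committing to a workflow.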

Additional language interpretation can also be delivered as a separate video stream. This separation matters if your audience members will chat with each other, since it lets them do so in their preferred language.

ASL (American Sign Language)

Image description: a screenshot of a virtual event. The title of the event, “Power In Community” is displayed at the top of the frame. In a large box on the left is the main presenter. His presentation is being interpreted by an ASL interpreter, who appears in his own box at the right side of the frame.

ASL interpreters translate spoken content into American Sign Language, a visual language. Because they appear on screen as talent, interpreters should be included in the same pre-production process as the rest of the on-screen talent.

ASL interpreters have several specific needs when interpreting a live event.

Interpreters work in teams to ensure accuracy. They also need real-time access to programming. Since most streaming platforms have a bit of delay, you’ll need to provide access to your feed in real time. We’ve found the most efficient solution is to invite interpreters into their own Zoom room and make them co-hosts. This allows them to spotlight themselves when it’s their turn to sign.

Multi-Language Support

Image description: a screenshot of a virtual event showing 4 presenters plus an ASL interpreter.

Virtual events enable a global audience to attend your event. Adding a second or third language track can provide access to non-native speakers. Additional language support can come in many flavors. A captioner could output closed captions in Spanish, for example. Or an interpreter could translate to French instead of American Sign Language.

Like captioning, new software services powered by AI offer real-time language interpretation. Services like wordly.ai integrate directly into many virtual event platforms.

Some platforms offer viewers the option to choose their language. Zoom has a feature enabling live translation. Zoom administrators can assign interpreters to a language, giving users the choice.

Language Interpretation Costs

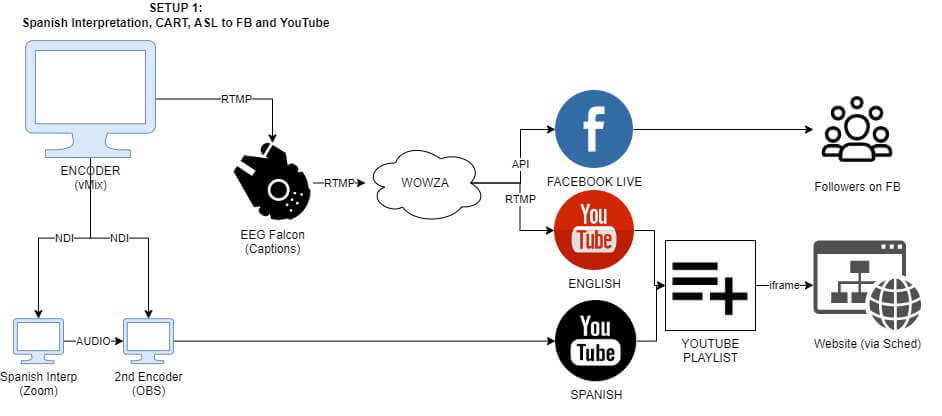

This workflow encodes live captions to Facebook and YouTube while simultaneously outputting a Spanish interpretation.

Language interpretation requires expert-level translation, technical skill, and subject matter expertise. Costs can range from $100 to $300 per hour of live show time. AI interpretation comes in at the lower end of that spectrum.

Representation

Image description: A screenshot of a virtual event, titled “National Day of Racial Healing 2021”. A panel of 12 speakers, arranged in a 4×3 grid, sits in the middle of the frame. In the bottom third of the frame are closed captions.

For audiences to connect to content, they need to see themselves represented in it. Minorities are underrepresented both on-screen and off-screen. Event planners need to ensure that they are representing a variety of cultures, genders, and ethnicities throughout their hiring practices.

When booking speakers for your event, consider the composition of your lineup. Does it reflect the diversity of your audience, or is it monochromatic? If you’re working with a speakers bureau to book talent, do they represent folks of all types? If not, consider reaching out to speakers bureaus that specialize in diverse talent.

Just as important as your on-screen talent are the folks producing the content. Ensure your hiring and vendor onboarding practices are reaching a diverse audience. Consider working with a crewing agency that specializes in diverse hiring practices. At the very least, your production company should have a diversity, equity, and inclusion statement that highlights its policies and practices. Bonus points if they offer a transparency report or can offer specifics on how they support diversity.

Conclusion

Accessible events are engaging events. Baking accessibility in from the beginning creates a more compelling viewing experience. Captioning, language interpretation, and diverse hiring practices all make events more accessible. With options available at different price points, very few barriers remain.

One good show can change your life. By improving accessibility, event planners create more life-changing opportunities for more people.

Author Bio:

Nick Bacon, virtual and hybrid event producer, does his best work behind the scenes. An avid people-watcher, his passion for elevating the people and communities around him led him to launch the creative agency Mainstream in 2013. Based in Chicago with offices in Virginia and California, Mainstream produces hybrid, virtual, in-person, and on-screen experiences that build, strengthen, and educate communities across the country.

Compassionate, competitive, and entrepreneurial, Bacon fights for improved labor conditions, gender and racial equity, and enhanced accessibility in the media and events industries. He believes that radically transparent communication is vital to change the landscape for workers in America.

Equal parts left- and right-brained, Bacon excels in creating technical solutions to creative problems (and vice versa). He lives in Richmond, Virginia with his wife, two children, and two dogs.